TL;DR:

- Reducing EMS response times is vital, as delays directly impact patient survival during cardiac arrests.

- Municipal leaders must accurately measure and analyze response time metrics, focusing on fractile data to identify outliers.

- Implementing targeted system redesign, community engagement, and leadership accountability ensures sustained improvements in emergency response performance.

Every minute a cardiac arrest patient waits for an EMS unit to arrive reduces the chance of survival. For Connecticut municipal leaders, that reality frames every conversation about EMS performance. Understanding exactly how to measure, interpret, and act on response time data is not an administrative exercise. It is a direct commitment to community health outcomes. This guide provides a structured, practical path through the metrics, methods, and strategies you need to identify gaps and drive measurable improvement in your municipality’s EMS system.

Table of Contents

- Understanding EMS response time metrics in Connecticut

- Gathering and preparing EMS response data

- Analyzing EMS response times: Essential methods and tools

- Troubleshooting response time analysis: Common pitfalls and solutions

- Turning data into action: Improving EMS response in your municipality

- What most analyses miss: The real levers for EMS impact

- Partner with experts for EMS system improvement

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Standardize your data | Always use NEMSIS-compliant data and definitions for consistent, comparable EMS response analyses. |
| Watch for hidden delays | Clarify your response time intervals and correct for start/stop measurement disputes to avoid performance misinterpretation. |
| Leverage technology | Implement GIS mapping, dashboards, and data visualization for resource allocation and transparent improvement. |
| Prioritize actionable steps | Focus on changes that address real community needs over expensive, isolated fixes for sustainable EMS improvement. |

Understanding EMS response time metrics in Connecticut

Before you can improve response times, you need to know precisely what you are measuring. Connecticut's Office of Emergency Medical Services (OEMS) uses the NEMSIS data standard, specifically versions 3.4 and 3.5, to define and collect EMS response time data. OEMS analyzes average and fractile response times by town, call class, and mode of response, and publishes detailed tables that break down delays by distance, traffic, and weather factors.

The NEMSIS standard defines two critical intervals you must track separately:

- Unit response interval: The time in minutes between dispatch notification (data element eTimes.03) and the unit’s arrival on scene (eTimes.06). This is what most people mean when they say “response time.”

- System response interval: The full time from initial call receipt at the communication center to unit arrival on scene, which includes any pre-assignment delays while the center locates and notifies an available unit.
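A minimal Python sketch of the two calculations follows. It assumes each record is a dictionary keyed by NEMSIS element IDs with ISO 8601 timestamps. eTimes.03 and eTimes.06 come from the definitions above; using eTimes.01 (PSAP Call Date/Time) as the call-receipt start for the system interval is an assumption to confirm against your own export, and the record itself is made up.

```python
from datetime import datetime
from typing import Optional

def minutes_between(start: Optional[str], end: Optional[str]) -> Optional[float]:
    """Elapsed minutes between two ISO 8601 timestamps, or None if either is missing."""
    if not start or not end:
        return None
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 60.0

def unit_response_interval(record: dict) -> Optional[float]:
    # Dispatch notification (eTimes.03) to arrival on scene (eTimes.06)
    return minutes_between(record.get("eTimes.03"), record.get("eTimes.06"))

def system_response_interval(record: dict) -> Optional[float]:
    # Call receipt (assumed here to be eTimes.01) to arrival on scene (eTimes.06)
    return minutes_between(record.get("eTimes.01"), record.get("eTimes.06"))

# Hypothetical call record for illustration only
call = {
    "eTimes.01": "2024-03-05T14:02:10",
    "eTimes.03": "2024-03-05T14:04:40",
    "eTimes.06": "2024-03-05T14:13:10",
}
print(unit_response_interval(call))
print(system_response_interval(call))
```

The 2.5-minute difference between the two results is exactly the pre-assignment time spent in the communication center.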

Understanding the difference between average and fractile response times is equally important. Average response time summarizes typical performance, but it can mask serious outliers. Fractile response time, usually expressed at the 90th percentile, is the time within which 90% of calls were reached; only one call in ten took longer. Most national standards, including those from the National Fire Protection Association (NFPA), rely on fractile measurements because they expose the worst-performing portion of your call volume.

| Metric | What it measures | Best used for |
|---|---|---|
| Average response time | Typical performance across all calls | Trend comparison over time |
| 90th percentile (fractile) | Time within which 90% of calls are reached | Benchmarking and accountability |
| 95th percentile | Time within which 95% of calls are reached (near worst case) | High-acuity call standards |
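A small, made-up set of unit response intervals shows how far these metrics can diverge. This sketch uses the nearest-rank percentile, which is the smallest value with at least the stated share of calls at or below it. Spreadsheet and statistics packages often interpolate instead, so confirm which method your agency's reports use before comparing numbers.

```python
import math
from statistics import mean, median

def nearest_rank_percentile(values, pct):
    """Smallest value such that at least pct% of values are at or below it."""
    if not values:
        raise ValueError("percentile of an empty list is undefined")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]

# Illustrative unit response intervals in minutes (not real Connecticut data)
intervals = [6.0, 6.5, 7.0, 7.0, 7.5, 8.0, 8.0, 8.5, 9.0, 9.5,
             10.0, 10.5, 11.0, 12.0, 14.0, 16.0, 19.0, 22.0, 25.0, 31.0]

print(f"mean:            {mean(intervals):.2f} min")
print(f"median:          {median(intervals):.2f} min")
print(f"90th percentile: {nearest_rank_percentile(intervals, 90):.2f} min")
print(f"95th percentile: {nearest_rank_percentile(intervals, 95):.2f} min")
```

With these numbers the mean is about 12.4 minutes, yet one call in ten takes longer than 22 minutes.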

Connecticut OEMS annual reports segment response delays by contributing factors at the town level, giving municipal leaders a ready-made starting point for local benchmarking. Many towns are surprised to discover how much their fractile numbers diverge from their averages once delay categories like rural distance and peak traffic hours are separated from the overall data.

Pro Tip: Always request both the average and 90th percentile figures from your EMS agency or dispatch center. If your agency only reports averages, you are likely missing the calls that matter most for policy decisions.

Reviewing EMS best practices for Connecticut municipalities will help you contextualize these metrics within broader system design and operational standards.

Gathering and preparing EMS response data

With clear definitions established, the next step is collecting and organizing accurate data within your jurisdiction. Municipal leaders often discover that the data exists but is scattered across multiple systems with inconsistent formatting.

Your primary data sources should include:

- NEMSIS data exports from your EMS agency’s patient care reporting (PCR) software

- Connecticut OEMS annual reports, which provide town-level response data using NEMSIS v3.4/3.5 standards

- Local Computer-Aided Dispatch (CAD) logs from your regional or local dispatch center

- Mutual aid records, which are frequently omitted but critically important for towns relying on neighboring jurisdictions

Data quality is the single most common barrier to useful analysis. To ensure comparability across time periods and agencies, every record should conform to NEMSIS v3.4 or later formatting. Records missing eTimes.03 (dispatch notification) or eTimes.06 (arrival on scene) cannot be used to calculate unit response intervals and must be flagged and investigated. A missing timestamp is not simply a blank field. It often signals a training gap, a software configuration problem, or a documentation habit that needs correction.

“If your PCR data has more than 5% of records with missing response time fields, your performance reports are not telling you the full story. Fix the data before you fix the system.” — PSCG operational guidance
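The missing-timestamp audit described above can be sketched in a few lines, assuming records arrive as Python dictionaries keyed by NEMSIS element IDs. The `incident_id` field and the sample records are hypothetical; the 5% alert level is the rule of thumb from the quotation above.

```python
from typing import Dict, List, Optional, Tuple

REQUIRED_FIELDS = ("eTimes.03", "eTimes.06")  # dispatch notification, arrival on scene
MISSING_SHARE_ALERT = 0.05                    # 5% rule of thumb

Record = Dict[str, Optional[str]]

def audit_missing_times(records: List[Record]) -> Tuple[List[Record], List[dict], float]:
    """Split records into usable and flagged lists and return the flagged share."""
    usable, flagged = [], []
    for rec in records:
        # None or an empty string both count as a missing timestamp
        missing = [f for f in REQUIRED_FIELDS if not rec.get(f)]
        if missing:
            flagged.append({"incident_id": rec.get("incident_id"), "missing": missing})
        else:
            usable.append(rec)
    share = len(flagged) / len(records) if records else 0.0
    return usable, flagged, share

# Hypothetical records for illustration only
records = [
    {"incident_id": "A1", "eTimes.03": "2024-01-02T08:00:00", "eTimes.06": "2024-01-02T08:07:30"},
    {"incident_id": "A2", "eTimes.03": "2024-01-02T09:15:00", "eTimes.06": None},
    {"incident_id": "A3", "eTimes.03": "", "eTimes.06": "2024-01-02T10:02:00"},
    {"incident_id": "A4", "eTimes.03": "2024-01-02T11:00:00", "eTimes.06": "2024-01-02T11:09:00"},
]

usable, flagged, share = audit_missing_times(records)
for item in flagged:
    print(f"{item['incident_id']}: missing {', '.join(item['missing'])}")
if share > MISSING_SHARE_ALERT:
    print(f"{share:.0%} of records lack required times; fix documentation before reporting")
```

Flagged records go to an investigation list instead of being silently dropped, which preserves the training and configuration signal they carry.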

Common data pitfalls to address before analysis:

- Inconsistent event coding that mislabels call priority classes

- Dispatch logs that record assignment acceptance rather than initial dispatch notification

- Weather and traffic data not captured in discrete fields, making it impossible to isolate delay causes

- Mutual aid calls recorded in one agency’s PCR but not the other’s
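Comparing incident numbers across CAD and PCR exports can surface records that appear in one system but not the other, such as mutual aid calls. This sketch assumes both systems share a common incident number, which is often not the case in practice. Where they do not, records must be matched on date, address, and unit instead. The incident numbers are invented.

```python
from typing import Dict, Iterable, List

def reconcile(cad_ids: Iterable[str], pcr_ids: Iterable[str]) -> Dict[str, List[str]]:
    """Compare incident numbers found in CAD logs with those found in PCR exports."""
    cad, pcr = set(cad_ids), set(pcr_ids)
    return {
        "cad_only": sorted(cad - pcr),  # dispatched, but no patient care report in this export
        "pcr_only": sorted(pcr - cad),  # care documented, but no matching dispatch record
        "matched": sorted(cad & pcr),
    }

# Invented incident numbers for illustration
cad_log = ["24-0101", "24-0102", "24-0103", "24-0104"]
pcr_export = ["24-0101", "24-0103", "24-0104", "24-0190"]

result = reconcile(cad_log, pcr_export)
print("Needs follow-up (CAD only):", result["cad_only"])
print("Needs follow-up (PCR only):", result["pcr_only"])
```

A CAD-only incident may be a call handled by a mutual aid partner. Its PCR then has to be requested from the neighboring agency rather than treated as missing.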

Reviewing EMS system design examples can help your team understand what well-structured data collection looks like in practice. For municipalities starting a formal review process, documenting your system assessment steps before touching the data will save significant rework downstream.

Analyzing EMS response times: Essential methods and tools

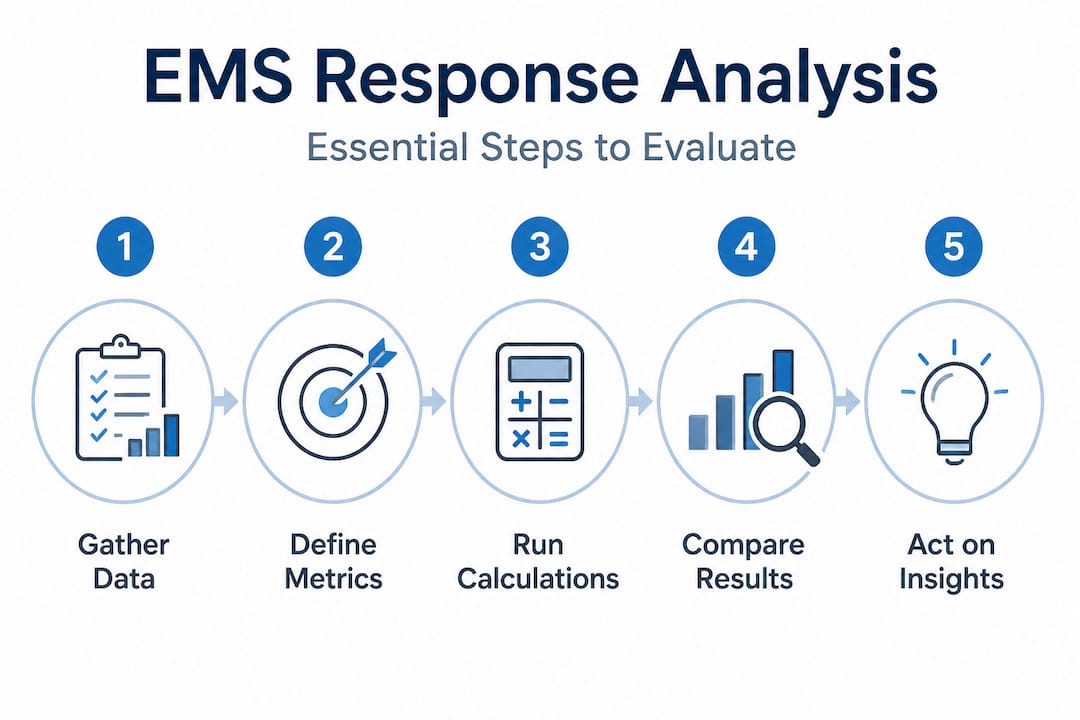

Once your data is clean and properly formatted, you are equipped to extract actionable insights. The following stepwise process provides a reliable framework for municipal analysis.

- Clean the data. Remove or flag records with missing timestamps, duplicate incident entries, and calls that fall outside the scope of your analysis period. Document every exclusion decision.

- Calculate core metrics. Compute the mean response time, median, 90th percentile, and 95th percentile for your full call volume. Segment by call priority class, time of day, geographic zone, and day of week.

- Detect outliers. Identify calls where the unit response interval exceeds the mean by more than two standard deviations. Each outlier deserves a root cause review: was the delay due to distance, unit unavailability, or a communication error?

- Benchmark against standards. Compare your results to state averages from OEMS reports and national benchmarks such as NFPA 1710 for career departments.

- Visualize distribution. Use histograms or cumulative frequency tables to see how response times are distributed. A tight distribution signals consistency. A long right tail reveals systemic problem areas.
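The steps above can be sketched in Python as follows. This is a minimal illustration that assumes each call has already been reduced to hypothetical `incident_id`, `priority`, and `minutes` (unit response interval) fields. Benchmarking is omitted because the comparison values depend on which OEMS or NFPA figures apply to your system. Percentiles use the nearest-rank method, and only the slow side of the two-standard-deviation test is flagged.

```python
import math
from collections import defaultdict
from statistics import mean, median, stdev

def pct_nearest_rank(values, pct):
    ordered = sorted(values)
    return ordered[max(math.ceil(pct * len(ordered) / 100), 1) - 1]

def summarize(minutes):
    return {"n": len(minutes), "mean": round(mean(minutes), 2), "median": median(minutes),
            "p90": pct_nearest_rank(minutes, 90), "p95": pct_nearest_rank(minutes, 95)}

def analyze(calls):
    # Step 1: clean. Drop records without an interval and duplicates, logging every exclusion.
    seen, clean, excluded = set(), [], []
    for c in calls:
        if c.get("minutes") is None:
            excluded.append((c["incident_id"], "missing interval"))
        elif c["incident_id"] in seen:
            excluded.append((c["incident_id"], "duplicate"))
        else:
            seen.add(c["incident_id"])
            clean.append(c)
    minutes = [c["minutes"] for c in clean]

    # Step 2: core metrics, overall and segmented by priority class.
    by_priority = defaultdict(list)
    for c in clean:
        by_priority[c["priority"]].append(c["minutes"])

    # Step 3: flag calls more than two standard deviations above the mean (needs 2+ calls).
    cutoff = mean(minutes) + 2 * stdev(minutes)
    outliers = [c["incident_id"] for c in clean if c["minutes"] > cutoff]

    # Step 5: distribution as counts per 2-minute bin.
    bins = defaultdict(int)
    for m in minutes:
        bins[int(m // 2) * 2] += 1

    return {
        "overall": summarize(minutes),
        "by_priority": {p: summarize(v) for p, v in sorted(by_priority.items())},
        "outliers": outliers,
        "excluded": excluded,
        "histogram": dict(sorted(bins.items())),
    }

# Invented calls; "P1"/"P2" are placeholder priority labels.
calls = [
    {"incident_id": "1", "priority": "P1", "minutes": 6.0},
    {"incident_id": "2", "priority": "P1", "minutes": 7.0},
    {"incident_id": "3", "priority": "P1", "minutes": 7.5},
    {"incident_id": "4", "priority": "P1", "minutes": 8.0},
    {"incident_id": "5", "priority": "P1", "minutes": 29.0},
    {"incident_id": "6", "priority": "P2", "minutes": 9.0},
    {"incident_id": "7", "priority": "P2", "minutes": 10.0},
    {"incident_id": "8", "priority": "P2", "minutes": 11.0},
    {"incident_id": "9", "priority": "P2", "minutes": 12.0},
    {"incident_id": "10", "priority": "P2", "minutes": 8.5},
    {"incident_id": "10", "priority": "P2", "minutes": 8.5},   # duplicate entry
    {"incident_id": "11", "priority": "P2", "minutes": None},  # missing timestamp
]

result = analyze(calls)
print(result["overall"])
for p, stats in result["by_priority"].items():
    print(p, stats)
print("outliers for root-cause review:", result["outliers"])
for lo, count in result["histogram"].items():
    print(f"{lo:>2}-{lo + 2:<2} min {'#' * count}")
```

Keeping the `excluded` list alongside the results makes the "document every exclusion" step auditable. The text histogram exposes the long right tail that a single average would hide.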

The NEMSIS unit response interval, measured in minutes from dispatch notification to arrival on scene, is the correct standard for this analysis. Use it as your baseline regardless of how your agency has historically reported performance.

| Comparison point | Unit response interval | System response interval |
|---|---|---|
| Start point | Dispatch notification (eTimes.03) | Initial call receipt |
| Captures | Unit travel and preparation time | Full communication center + unit time |
| Policy implication | Unit deployment decisions | Dispatch center staffing decisions |
| Benchmarking standard | NEMSIS, OEMS | Municipal contracts |

Understanding deployment models is essential at this stage because the same response time number can mean very different things depending on whether your system uses static posting, System Status Management (SSM), or a hybrid approach. Similarly, reviewing strategies for optimizing response models will inform how you translate analysis findings into resource decisions.

Pro Tip: Run your 90th percentile calculation separately for high-priority calls (ALS emergencies) and lower-acuity calls. Blending all call types into a single figure often hides the fact that your most critical patients are waiting the longest.

Troubleshooting response time analysis: Common pitfalls and solutions

Knowing how to run the analysis is only useful if you avoid the interpretation errors that consistently distort findings. Municipal leaders in Connecticut face several recurring problems when reviewing EMS response data.

The start time dispute is perhaps the most consequential issue. City-reported data often includes pre-assignment delays, meaning the clock starts at the moment the call enters the dispatch queue. NEMSIS and OEMS standards, by contrast, start the unit response interval at dispatch notification to the unit, which excludes the time the communication center spends finding an available unit. This discrepancy directly impacts perceived performance, and it explains why a municipality and its EMS provider can look at the same calls and report very different response time numbers.
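The sketch below shows how much the start point matters. It computes the mean and 90th percentile for the same five invented calls using three different clock starts. The field names `call_received`, `unit_notified`, `unit_accepted`, and `on_scene` are hypothetical stand-ins for whatever your CAD or PCR export uses.

```python
import math
from datetime import datetime
from statistics import mean

def minutes_between(start: str, end: str) -> float:
    return (datetime.fromisoformat(end) - datetime.fromisoformat(start)).total_seconds() / 60

def p90(values):
    ordered = sorted(values)
    return ordered[math.ceil(90 * len(ordered) / 100) - 1]  # nearest-rank method

def call(day_hour, received, notified, accepted, scene):
    stamp = lambda t: f"2024-05-01T{day_hour}:{t}"
    return {"call_received": stamp(received), "unit_notified": stamp(notified),
            "unit_accepted": stamp(accepted), "on_scene": stamp(scene)}

# Five invented calls; each tuple holds minute:second values within the given hour.
calls = [
    call("10", "00:00", "01:30", "02:00", "09:00"),
    call("11", "00:00", "04:00", "04:30", "14:00"),  # unit slow to become available
    call("12", "00:00", "01:00", "01:45", "08:00"),
    call("13", "00:00", "02:00", "02:30", "12:00"),
    call("14", "00:00", "00:45", "01:15", "06:45"),
]

START_POINTS = {
    "call receipt": "call_received",           # includes queue and pre-assignment delay
    "dispatch notification": "unit_notified",  # NEMSIS/OEMS unit response interval start
    "assignment acceptance": "unit_accepted",  # starts the clock latest of the three
}

results = {}
for label, field in START_POINTS.items():
    intervals = [minutes_between(c[field], c["on_scene"]) for c in calls]
    results[label] = (mean(intervals), p90(intervals))
    print(f"{label:<22} mean {results[label][0]:5.2f} min   90th {results[label][1]:5.2f} min")
```

With only five calls, the nearest-rank 90th percentile is simply the slowest call; a real comparison needs far more records. The direction of the effect still holds: the later the clock starts, the better the same calls look.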

Common pitfalls and their solutions:

- Ignoring incomplete records: Partial records skew your averages downward because failed documentation tends to cluster around difficult, longer calls. Audit records with missing fields before finalizing any report.

- Failing to segment by geography: A rural town in eastern Connecticut has structurally different response characteristics than a dense urban center. Comparing them without adjustment is not meaningful analysis.

- Overlooking weather and traffic normalization: Connecticut OEMS reports specifically segment delays by contributing factors. If your internal analysis does not do the same, you will misattribute operational failures to conditions outside your control.

- Using a single metric for all decisions: No single number tells the complete performance story. Combine average, fractile, and distribution data for sound conclusions.
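To segment by geography and contributing factor, group calls on any combination of fields before computing the fractile. The `zone` and `delay_factor` fields and all values below are hypothetical. OEMS's own delay categories (distance, traffic, weather) would map onto something like `delay_factor`.

```python
import math
from collections import defaultdict

def p90(values):
    """Nearest-rank 90th percentile."""
    ordered = sorted(values)
    return ordered[math.ceil(90 * len(ordered) / 100) - 1]

def p90_by(calls, *keys):
    """Group calls by the given fields and return the 90th percentile for each group."""
    groups = defaultdict(list)
    for c in calls:
        groups[tuple(c[k] for k in keys)].append(c["minutes"])
    return {group: p90(mins) for group, mins in sorted(groups.items())}

# Invented calls for illustration
calls = [
    {"zone": "north", "delay_factor": "none", "minutes": 7.0},
    {"zone": "north", "delay_factor": "none", "minutes": 8.0},
    {"zone": "north", "delay_factor": "weather", "minutes": 15.0},
    {"zone": "south", "delay_factor": "none", "minutes": 11.0},
    {"zone": "south", "delay_factor": "none", "minutes": 13.0},
    {"zone": "south", "delay_factor": "distance", "minutes": 18.0},
]

by_zone = p90_by(calls, "zone")
by_zone_factor = p90_by(calls, "zone", "delay_factor")
print(by_zone)
print(by_zone_factor)
```

In this made-up example, a weather-delayed call drives the north zone's 15-minute figure. The south zone, by contrast, is slower even on calls with no recorded delay factor, which points to an operational or structural gap rather than conditions.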

“The start time used in your response time calculation changes everything. Before drawing any conclusions, confirm whether your data starts at call receipt, dispatch notification, or unit assignment acceptance. These are not interchangeable.”

Pro Tip: Request a side-by-side comparison from your dispatch center and EMS provider using the same incident records. If the numbers disagree, the methodology difference is the first problem to solve. Quality improvement in EMS starts with data integrity, not performance targets.

Turning data into action: Improving EMS response in your municipality

Once errors and pitfalls are addressed, municipal leaders can focus on translating findings into real improvements. The good news is that Connecticut’s regulatory and planning environment supports a data-driven approach at the local level.

GIS-based tools allow your team to overlay response time data on geographic maps, identifying specific zones where unit response intervals consistently exceed acceptable thresholds. Geographic Information System (GIS) analysis supports Primary Service Area (PSA) boundary review, ambulance posting location optimization, and mutual aid trigger decisions. The Connecticut State EMS Plan 2023-2028 specifically highlights the use of GIS for PSA boundaries and ambulance placement, along with proposed dashboard transparency measures under Senate Bill 238 to enable geography and call-type analysis for resource allocation.
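Before investing in full GIS tooling, a rough version of this overlay is possible. The sketch bins geocoded calls into a latitude/longitude grid and flags cells whose 90th percentile exceeds a local threshold. It is only a crude stand-in for GIS analysis. The 0.01-degree cell size, the 10-minute threshold, the minimum call count, and the coordinates are all illustrative assumptions, and a real PSA or posting review would use road-network travel times in a GIS package.

```python
import math
from collections import defaultdict

CELL_DEG = 0.01        # grid cell size in degrees (illustrative; roughly 1 km north-south)
THRESHOLD_MIN = 10.0   # hypothetical local 90th percentile target, in minutes
MIN_CALLS = 3          # ignore cells with too few calls to judge

def p90(values):
    """Nearest-rank 90th percentile."""
    ordered = sorted(values)
    return ordered[math.ceil(90 * len(ordered) / 100) - 1]

def flag_cells(calls):
    """calls: iterable of (latitude, longitude, unit response interval in minutes)."""
    cells = defaultdict(list)
    for lat, lon, minutes in calls:
        cells[(math.floor(lat / CELL_DEG), math.floor(lon / CELL_DEG))].append(minutes)
    flagged = {}
    for (row, col), mins in cells.items():
        if len(mins) >= MIN_CALLS and p90(mins) > THRESHOLD_MIN:
            # Key each flagged cell by its south-west corner so it can be plotted on a map.
            flagged[(round(row * CELL_DEG, 2), round(col * CELL_DEG, 2))] = p90(mins)
    return flagged

# Invented geocoded calls
calls = [
    (41.7625, -72.6815, 7.0), (41.7631, -72.6822, 8.5), (41.7612, -72.6808, 9.0),
    (41.7855, -72.6455, 12.0), (41.7862, -72.6441, 14.5), (41.7848, -72.6463, 9.5),
    (41.7710, -72.6605, 20.0),  # lone call: too few in its cell to judge
]

flagged = flag_cells(calls)
for corner, value in flagged.items():
    print(f"cell at {corner}: 90th percentile {value} min exceeds {THRESHOLD_MIN} min")
```

The minimum-count rule keeps a single slow call from painting a whole cell red, which matters when results go on a public dashboard.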

Key action strategies for Connecticut municipalities:

- Deploy response time dashboards that update weekly or monthly, segmented by town zone, call priority, and time of day

- Review PSA boundaries against current population density and call volume maps annually

- Establish formal mutual aid triggers based on unit response interval data, not informal judgment

- Engage community stakeholders with public-facing performance summaries to build transparency and trust

- Set fractile targets in EMS contracts and measure provider performance against those targets quarterly
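A fractile contract target is usually phrased as "X% of calls within Y minutes." You can check it each quarter by computing the share of calls at or under the threshold. In this sketch, the 9-minute, 90% target and the call data are hypothetical placeholders, not a recommended Connecticut standard.

```python
from collections import defaultdict
from datetime import date

TARGET_MINUTES = 9.0   # hypothetical contract threshold
TARGET_SHARE = 0.90    # hypothetical fractile: 90% of calls must meet it

def quarter(d: date) -> str:
    return f"{d.year}-Q{(d.month - 1) // 3 + 1}"

def quarterly_compliance(calls):
    """calls: iterable of (call date, unit response interval in minutes)."""
    by_quarter = defaultdict(list)
    for call_date, minutes in calls:
        by_quarter[quarter(call_date)].append(minutes)
    report = {}
    for q in sorted(by_quarter):
        mins = by_quarter[q]
        share = sum(m <= TARGET_MINUTES for m in mins) / len(mins)
        report[q] = {"calls": len(mins), "share_within_target": share,
                     "meets_target": share >= TARGET_SHARE}
    return report

# Invented intervals for two quarters
q1 = [6, 7, 7.5, 8, 8, 8.5, 8.5, 9, 9, 12]
q2 = [7, 8, 10, 8.5, 6]
calls = [(date(2024, 1, day), m) for day, m in enumerate(q1, start=1)]
calls += [(date(2024, 4, day), m) for day, m in enumerate(q2, start=1)]

report = quarterly_compliance(calls)
for q, row in report.items():
    status = "meets" if row["meets_target"] else "misses"
    print(f"{q}: {row['share_within_target']:.0%} of {row['calls']} calls within target ({status})")
```

Checking the share within the threshold is equivalent to checking the nearest-rank 90th percentile against it, and it reads more naturally in contract language.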

| Approach | Data requirement | Timeline for impact |
|---|---|---|
| GIS posting optimization | 12 months of geo-coded CAD data | 3 to 6 months |
| Dashboard reporting | NEMSIS-compliant PCR exports | 1 to 3 months |
| PSA boundary revision | Call volume, population, travel time | 6 to 12 months |
| Mutual aid protocol update | Response interval data by zone | 1 to 2 months |

Pro Tip: When presenting response time data to your board of selectmen or town council, lead with fractile results and geographic maps rather than system averages. Visual distribution data communicates urgency far more effectively than a single average figure. Explore consulting strategies for public safety to see how other Connecticut municipalities have structured similar improvement initiatives.

What most analyses miss: The real levers for EMS impact

We work with municipal leaders across Connecticut who have invested considerable effort in pulling data, building dashboards, and conducting performance reviews, only to find that response times improve modestly or not at all. The reason is usually not the data. It is the absence of sustained leadership commitment and structural change.

Data gives you a diagnosis. It does not provide the will to act on it. In our experience, the municipalities that achieve lasting improvement share three characteristics: their leadership treats EMS response time as a governance issue, not just an operational metric; they hold providers and dispatch centers accountable through contract language rather than informal conversations; and they involve the community in setting expectations, not just receiving outcomes.

There is also a persistent tendency to chase technology solutions before fixing fundamental system design problems. New dispatch software, GPS tracking tools, and predictive posting algorithms are all potentially valuable. But they amplify whatever system you already have. If your PSA boundaries are outdated, your mutual aid protocols are vague, or your data collection has gaps, technology investments will not close the performance gap. The concept of smart growth in EMS reflects exactly this principle: structural redesign, leadership accountability, and community integration produce better outcomes than isolated technology purchases.

The most impactful lever is almost always the one that costs the least: clear, consistent measurement combined with the organizational discipline to act on what the numbers reveal. That combination is harder to build than any software platform, but it is the only thing that produces durable results.

Partner with experts for EMS system improvement

Connecticut municipalities ready to move from analysis to action benefit most from working with partners who understand both the technical requirements and the local realities of EMS system performance.

At PSCG, we work alongside your leadership team to close the gap between data and results. Whether you need a structured EMS strategy guide to frame your improvement process, expert support for strategic planning that aligns EMS goals with municipal budgets, or hands-on system design consulting to restructure how your community delivers emergency response, we bring proven frameworks and Connecticut-specific knowledge to every engagement. Contact us today to start building a measurable, accountable EMS improvement program for your community.

Frequently asked questions

What counts as an EMS response time in Connecticut?

EMS response time is defined from dispatch notification to the unit’s arrival on scene, following NEMSIS standards using data elements eTimes.03 and eTimes.06. This unit response interval is the standard used by Connecticut OEMS for performance reporting.

How can municipalities access reliable response time data in Connecticut?

Municipalities should use Connecticut OEMS annual reports alongside NEMSIS-compliant local data exports for standardized, comparable results. Requesting CAD logs and PCR exports that match NEMSIS v3.4 or later formatting ensures your data is analysis-ready.

What are common issues with interpreting EMS response time data?

Confusion most often arises from the start time used in the calculation, since city dispatch data may include pre-assignment delays that NEMSIS and OEMS standards exclude. This single difference can make the same system appear to perform very differently depending on whose numbers you are reviewing.

How can Connecticut towns use response time analysis to improve EMS?

Use GIS and dashboards to identify delay patterns by geography and call type, then adjust ambulance posting locations and PSA boundaries accordingly. Transparent public reporting tied to fractile targets gives both providers and residents a clear standard for accountability.