TL;DR:

- Answering every 911 call within 30 seconds is critical for public trust and effective emergency response.

- Structured assessments identify operational gaps across personnel, technology, protocols, and facilities to improve performance.

When a 911 call is not answered within the first 30 seconds, or when a dispatcher lacks the protocol clarity to triage a cardiac arrest correctly, the consequences reach far beyond a delayed response time. Communities lose trust, outcomes worsen, and municipal leaders find themselves defending operational failures that a structured assessment could have prevented. For public safety directors, mayors, and EMS administrators, the emergency communications center is not simply a call-answering function; it is the operational foundation upon which every field response is built. This article walks you through a practical, step-by-step framework for assessing your communications center, identifying performance gaps, and implementing improvements that produce measurable results.

Table of Contents

- Understanding the assessment purpose and scope

- Preparation: Gathering essential requirements and resources

- Step-by-step communications center assessment process

- Common mistakes and troubleshooting during the assessment

- Verification: Interpreting results and implementing improvements

- Expert perspective: Why conventional wisdom can hinder communications center performance

- Transforming your municipality’s emergency communications

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Assessment scope matters | Defining which areas to evaluate ensures comprehensive improvements rather than isolated fixes. |
| Preparation prevents delays | Structured preparation, including gathering documents and key staff, shortens the assessment process and avoids missed details. |
| Balance speed with precision | Optimizing dispatch logic means weighing both rapid response and accurate medical assessment. |
| Troubleshoot common pitfalls | Identifying frequent mistakes, such as overlooking interoperability or relying solely on technology, helps leaders deliver actionable results. |
| Leadership drives improvement | Lasting performance changes depend on strong leadership and strategic collaboration, not just technology upgrades. |

Understanding the assessment purpose and scope

With the stakes established, let’s clarify what a thorough assessment should accomplish and what it should cover.

An emergency communications center assessment is not a routine audit. It is a structured evaluation designed to identify operational gaps, measure performance effectiveness, and produce a prioritized roadmap for improvement. Our communications center consulting insights reinforce that municipalities often underestimate how many interconnected components require simultaneous evaluation. Pulling on one thread without understanding the whole fabric leads to piecemeal improvements that don’t hold.

The scope of a thorough assessment covers several critical domains:

- Personnel: Staffing ratios, training records, certification currency, and shift fatigue patterns

- Technology: CAD systems, radio interoperability, backup systems, and software integration

- Protocols: Call handling procedures, dispatch logic, priority dispatch systems, and quality assurance workflows

- Facility readiness: Physical infrastructure, redundant power systems, ergonomics, and COOP (Continuity of Operations Plan) compliance

Research confirms that organizational structure matters enormously here. Two-level filtering systems affect QS30, the percentage of incoming calls answered within 30 seconds, differently depending on how the center is configured. In one emergency medical communications center (EMCC) studied, QS30 improved to 100% after structural changes, while another EMCC experienced a slight decline under an identical system. This finding challenges the assumption that a single structural solution works universally.

You should also think carefully about when to conduct an assessment. The most common triggers include:

| Trigger event | Urgency level | Recommended action |
|---|---|---|
| Major incident or near-miss | High | Immediate review within 60 days |
| Technology upgrade or CAD migration | High | Pre- and post-implementation assessment |
| Significant staffing changes | Medium | Assessment within 90 days |
| Routine 2 to 3 year cycle | Baseline | Scheduled, structured review |
| Legislative or compliance changes | Medium | Gap analysis against new standards |

Our system assessment steps outline how triggering events should shape the depth and focus of each evaluation, ensuring that you’re not applying the same scope to a routine review that you would to a post-incident investigation.

Preparation: Gathering essential requirements and resources

Once you understand the scope, it’s time to gather what you’ll need for a structured assessment.

Preparation is where most municipalities lose ground before the assessment even begins. Incomplete document sets, misidentified subject matter experts, and inaccessible historical data all create blind spots that skew your findings. Before your assessment team enters the communications center, the following materials must be in hand:

- Standard Operating Procedures (SOPs) and their revision dates

- Shift logs from at least the past 12 months

- Call volume data segmented by time of day, call type, and agency

- Quality Assurance (QA) review records and any documented performance improvement plans

- Technology system architecture diagrams and vendor support contracts

- Personnel records covering training, certifications, and performance evaluations

Identifying the right roles is equally important. Your assessment team should include a lead assessor with direct communications center experience, an IT specialist who understands the CAD and radio infrastructure, at least one senior dispatcher who can validate protocol accuracy, and an external consultant who brings objective perspective without internal organizational bias. Your emergency management consulting partner can help structure this team configuration to avoid conflicts of interest or incomplete coverage.

The table below outlines what each team member should be responsible for gathering, and adds a compliance officer to cover regulatory alignment:

| Role | Primary responsibilities | Data sources |
|---|---|---|
| Lead assessor | Protocols, SOPs, call handling quality | QA records, incident reports |
| IT specialist | CAD, radio systems, backup infrastructure | System logs, vendor contracts |
| Senior dispatcher | Procedural accuracy, training gaps | Shift logs, daily activity reports |
| External consultant | Objective benchmarking, gap analysis | National standards, peer comparisons |
| Compliance officer | Federal and state regulatory alignment | APCO, NENA, local mandates |

Research comparing contrasting outcomes shows that organizational structure can drastically change results. When one center improves its answered call rate to 100% under a two-level filtering system while a comparable center declines under the same model, the lesson is that preparation must account for the unique structure of your center, not a generic template.

When building your checklists, cross-reference them against current interoperability standards and dispatch center consulting guidelines to make sure you’re not measuring against outdated benchmarks.

Pro Tip: Before the assessment begins, request three months of raw call data and run a simple frequency analysis. Patterns in call volume spikes, after-hours incidents, and repeat caller types will focus your assessment energy on the highest-impact areas rather than spreading effort evenly across all functions.
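
The frequency analysis described in this tip can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the record layout (an ISO timestamp plus a call type) and the sample values are assumptions, and a real CAD or phone system export will use its own field names.

```python
from collections import Counter
from datetime import datetime

# Hypothetical records: (ISO timestamp of call arrival, call type).
# A real export will have different fields and far more rows.
calls = [
    ("2024-03-01T02:14:00", "medical"),
    ("2024-03-01T17:05:00", "fire"),
    ("2024-03-01T17:40:00", "medical"),
    ("2024-03-02T17:22:00", "medical"),
    ("2024-03-02T23:51:00", "law"),
]


def calls_by_hour(records):
    """Count calls per hour of day (0-23)."""
    return Counter(datetime.fromisoformat(ts).hour for ts, _ in records)


def calls_by_type(records):
    """Count calls per call type."""
    return Counter(call_type for _, call_type in records)


by_hour = calls_by_hour(calls)
by_type = calls_by_type(calls)

# most_common() lists the busiest hours and most frequent call types first.
print(by_hour.most_common(3))
print(by_type.most_common())
```

In this made-up sample, the 17:00 hour and medical calls dominate, which is the kind of pattern that would direct assessment attention toward late-afternoon staffing and medical call handling.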

Step-by-step communications center assessment process

With resources in place, let’s walk through the step-by-step assessment process.

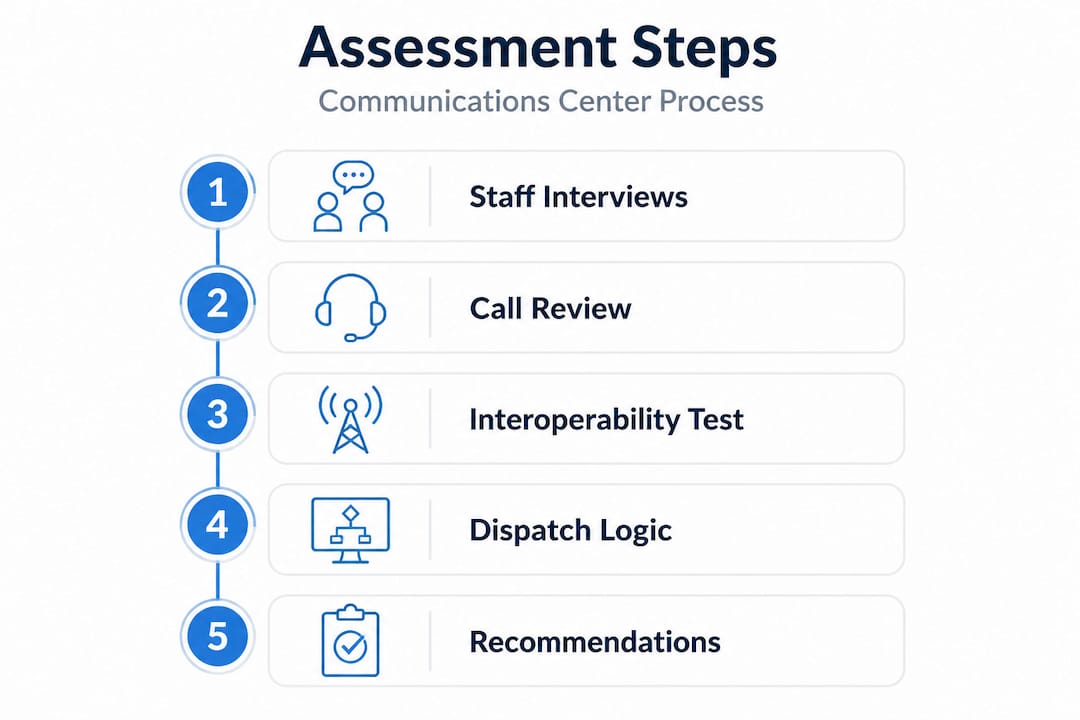

A well-executed assessment moves methodically through five core steps. Skipping any one of them produces findings that are incomplete and, in some cases, actively misleading.

Step 1: Conduct staff interviews to identify bottlenecks

Start with structured, one-on-one interviews with dispatchers, supervisors, and training officers. Ask open-ended questions about where calls slow down, which protocols create hesitation, and what technology limitations affect daily performance. Staff at the operational level often hold knowledge that no report captures. Use a standardized interview guide to ensure consistency across all respondents.

Step 2: Review call handling times, especially QS30 metrics

QS30 is the percentage of incoming calls answered within 30 seconds. It is one of the most direct indicators of center performance and is influenced by staffing levels, technology response times, and call routing logic. Pull QS30 data by shift, day of week, and call type. Look for patterns in variance, not just averages. A center that answers 95% of calls within 30 seconds overall may still be missing a critical evening shift window that affects cardiac response times.
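
As a rough sketch of this calculation, the Python below computes QS30 overall and per shift. The record layout, the shift labels, and the sample answer times are assumptions for illustration, and "within 30 seconds" is treated here as an answer time of 30 seconds or less.

```python
from collections import defaultdict

# Hypothetical records: (shift label, seconds from call arrival to answer).
answer_times = [
    ("day", 6.0), ("day", 12.5), ("day", 9.0), ("day", 28.0),
    ("evening", 8.0), ("evening", 41.0), ("evening", 35.5), ("evening", 14.0),
    ("night", 5.0), ("night", 31.0),
]

THRESHOLD_SECONDS = 30.0  # assumption: "within" means <= 30 seconds


def qs30(seconds_list, threshold=THRESHOLD_SECONDS):
    """Percentage of calls answered within the threshold."""
    if not seconds_list:
        raise ValueError("no calls to evaluate")
    answered = sum(1 for s in seconds_list if s <= threshold)
    return 100.0 * answered / len(seconds_list)


def qs30_by_group(records):
    """Compute QS30 separately for each group label (for example, each shift)."""
    groups = defaultdict(list)
    for group, seconds in records:
        groups[group].append(seconds)
    return {group: qs30(values) for group, values in groups.items()}


overall = qs30([s for _, s in answer_times])
per_shift = qs30_by_group(answer_times)

print(f"overall: {overall:.1f}%")
for shift, value in per_shift.items():
    print(f"{shift}: {value:.1f}%")
```

With this sample data the overall figure is 70%, but the day shift sits at 100% while the evening and night shifts are at 50%. The same grouping function works for day of week or call type by changing the label in each record.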

Step 3: Test interoperability between agencies and technologies

Run live or tabletop interoperability tests that simulate multi-agency incidents. Confirm that communications channels between fire, EMS, law enforcement, and hospital systems function as documented. This step frequently reveals gaps between what’s written in the SOP and what actually happens under pressure. Our innovation profiles highlight how leading centers approach this challenge by standardizing radio interoperability protocols across agencies before crises occur.

Step 4: Evaluate dispatch logic for medical assessment versus rapid response

This is where assessment findings often become most contested. Research confirms that dispatch logic creates tension between precise medical assessment and rapid alarming. Centers that prioritize comprehensive caller interrogation may delay unit activation, while centers that activate units first may send crews who arrive with incomplete patient information. Neither extreme is correct. Your assessment should document where your center sits on this spectrum and whether it aligns with your community’s call profile and response resources.

For context on common misconceptions around dispatch logic, our dispatch logic myths resource addresses several assumptions that undermine effective assessment.

Step 5: Gather actionable recommendations and rank by priority

Every finding must produce a recommendation, and every recommendation must be ranked by impact and implementation cost. Avoid producing a report full of observations without a clear priority framework. Municipal leaders need to know what to fix first, what requires budget planning, and what can be addressed through policy updates alone.
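
One simple way to build such a priority framework is to score each recommendation and sort the list. The sketch below assumes a hypothetical 1-to-5 scale for both impact and cost, and the example recommendations are invented; your team would substitute its own scoring rubric.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    title: str
    impact: int  # hypothetical scale: 1 (low) to 5 (high)
    cost: int    # hypothetical scale: 1 (policy update only) to 5 (major capital budget)


def rank(recommendations):
    """Sort by highest impact first, then by lowest cost."""
    return sorted(recommendations, key=lambda r: (-r.impact, r.cost))


recs = [
    Recommendation("Replace legacy CAD platform", impact=4, cost=5),
    Recommendation("Revise cardiac arrest call-handling SOP", impact=5, cost=1),
    Recommendation("Add evening-shift call-taker position", impact=5, cost=3),
    Recommendation("Update console ergonomics", impact=2, cost=2),
]

ranked = rank(recs)
for position, r in enumerate(ranked, start=1):
    print(position, r.title, f"(impact {r.impact}, cost {r.cost})")
```

Sorting on impact first and cost second puts high-impact policy updates at the top of the list, ahead of equally important items that require budget planning.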

Pro Tip: Pull a sample of 25 to 50 actual call recordings and use them to test your written protocols in real-world context. The gap between what the SOP says and what dispatchers actually do under pressure is often your most valuable finding. Reviewing EMS customer service principles alongside call recordings also reveals caller experience issues that purely operational metrics miss.
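
To keep the recording sample free of selection bias, the review set can be drawn at random. The following is a minimal sketch, assuming recordings are identified by simple string IDs (the `REC-` format is hypothetical); a fixed seed is used so that another reviewer can reproduce the same selection.

```python
import random


def sample_recordings(recording_ids, sample_size=30, seed=2024):
    """Return a reproducible random sample of recording IDs, without replacement."""
    if sample_size > len(recording_ids):
        raise ValueError("sample_size exceeds the number of recordings")
    rng = random.Random(seed)  # fixed seed so the selection can be repeated
    return sorted(rng.sample(recording_ids, sample_size))


# Hypothetical identifiers; a real logging recorder will use its own scheme.
all_recordings = [f"REC-{n:05d}" for n in range(1, 1201)]

review_set = sample_recordings(all_recordings, sample_size=30)
print(len(review_set), "recordings selected for protocol review")
```

A sample size anywhere in the 25 to 50 range fits the tip above; 30 is only a default chosen for this example.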

Common mistakes and troubleshooting during the assessment

Even a robust process risks missteps. Here’s how to recognize and resolve common challenges.

Municipal leaders and assessment teams consistently run into the same categories of problems. Recognizing them early saves time and protects the integrity of your findings.

- Overlooking organizational structure: Assuming that identical systems produce identical outcomes across differently structured centers is a critical error. The evidence shows that two-level filtering systems produce variable results based on how the organization is built around them.

- Assuming technology solves communication gaps: New CAD systems, upgraded radio infrastructure, and enhanced mapping tools are valuable. But they amplify good processes and bad ones equally. Technology investments without accompanying protocol and training improvements frequently disappoint.

- Failing to balance rapid response with comprehensive assessment: Centers that rush to alarm units before completing caller interrogation may improve activation times while degrading resource accuracy. The inverse is equally problematic.

- Ignoring legacy system interoperability: Older radio systems, legacy CAD platforms, and analog-to-digital transitions create compatibility gaps that only surface during multi-agency incidents. Assessment teams often overlook these because they’re not visible in routine operations. Our analysis of C-MED dispatch evolution illustrates exactly how legacy technology assumptions have created operational vulnerabilities that went undetected for years.

“The tension between precise medical assessment and rapid alarming can lead to both improved and worsened response metrics, depending on how dispatch logic is configured and whether organizational structure supports the intended design.”

Troubleshooting during the assessment requires intellectual honesty. If the data contradicts your assumptions, follow the data. Confirmation bias is a real risk when internal teams lead their own assessments, which is one of the strongest arguments for engaging external reviewers at key evaluation points.

Verification: Interpreting results and implementing improvements

After troubleshooting, verify your assessment results and decide next steps for improvement.

Interpretation is where assessments either deliver lasting value or produce well-meaning reports that gather dust. The first step is benchmarking your findings against national standards, including APCO ANS 1.101.1, NENA standards for 911 call answering, and any state-specific requirements governing your jurisdiction.

The comparison table below illustrates how organizational structure changes can produce meaningfully different outcomes in the same key metric:

| Scenario | Organizational structure | QS30 result | Recommendation direction |
|---|---|---|---|
| Center A | Two-level filtering, integrated | 100% answered in 30 seconds | Maintain structure, refine protocols |
| Center B | Two-level filtering, fragmented | QS30 declined post-implementation | Structural realignment needed |
| Center C | Single-tier, high volume | 87% answered in 30 seconds | Staffing and routing optimization |
| Center D | Single-tier, low volume rural | 93% answered in 30 seconds | Technology upgrade, not staffing |

Research confirms that two-level filtering systems produce variable answered-call rates, so your benchmarking analysis must account for your specific configuration rather than relying on a national average alone.

When communicating results to stakeholders, present findings in three tiers: critical findings requiring immediate action, significant gaps needing budget-supported solutions, and optimization opportunities for long-term improvement. This tiered communication structure helps elected officials and finance departments understand urgency without creating unnecessary alarm.

Our EMS needs assessment framework provides a parallel model for translating assessment findings into policy-ready language that municipal leaders can bring directly to their governing boards.

Key implementation actions following verification include:

- Updating SOPs based on identified protocol deviations

- Scheduling targeted retraining for skill gaps revealed in staff interviews

- Issuing technology procurement recommendations with cost-benefit context

- Establishing a 90-day re-evaluation checkpoint to measure improvement against baseline findings
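
The 90-day checkpoint in the last item comes down to comparing each metric against its baseline value. The sketch below shows one way to do that; the metric names and figures are hypothetical, and it assumes that a higher value is better for every metric listed.

```python
# Hypothetical metrics; both are percentages where higher is better.
baseline = {"qs30_pct": 87.0, "dispatch_accuracy_pct": 78.0}
checkpoint = {"qs30_pct": 92.5, "dispatch_accuracy_pct": 76.0}


def compare(baseline_metrics, checkpoint_metrics):
    """Return the change (checkpoint minus baseline) for each shared metric."""
    return {
        name: round(checkpoint_metrics[name] - baseline_metrics[name], 2)
        for name in baseline_metrics
        if name in checkpoint_metrics
    }


changes = compare(baseline, checkpoint)

# Any metric that moved in the wrong direction needs follow-up.
regressions = [name for name, delta in changes.items() if delta < 0]

for name, delta in changes.items():
    print(f"{name}: {delta:+.1f} points")
print("needs follow-up:", regressions)
```

In this invented example QS30 improves by 5.5 points while dispatch accuracy slips by 2 points, so the checkpoint would flag accuracy for follow-up even though the headline metric improved.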

Expert perspective: Why conventional wisdom can hinder communications center performance

Here’s a candid take on what makes improvement efforts actually last.

We have worked alongside enough municipal communications centers to recognize a recurring pattern: leaders invest in a structural change or a technology upgrade, wait for performance metrics to improve, and then find themselves frustrated when the gains are minimal or temporary. The conventional assumption is that if you fix the system architecture, performance follows automatically. In our experience, that assumption is where most improvement efforts stall.

The real leverage points are leadership behavior, dispatcher training culture, and cross-agency collaboration. In our experience, a center with a motivated supervisor who runs weekly case reviews and conducts regular joint drills with field crews will outperform a technologically superior center where leadership is disengaged. The evidence points the same way: when the same structural model produces opposite results in two centers, human judgment and organizational behavior are likely the decisive variables.

We encourage municipal leaders to look at their municipal response consulting investments through this lens. Before committing significant budget to technology upgrades, ask whether your leadership development and interagency training programs are producing the behavioral outcomes that technology is supposed to support. In many cases, a six-month investment in dispatcher coaching and joint agency drills will move performance metrics faster and more durably than a CAD upgrade alone.

Pro Tip: Invest in quarterly joint agency simulation drills that force your communications center staff to work through realistic multi-agency scenarios in real time. The performance gaps that emerge in simulation are far less costly than the ones you discover during an actual mass casualty event.

Transforming your municipality’s emergency communications

Assessment is only the beginning. The real value comes from what you do with the findings.

At The Public Safety Consulting Group, we work alongside municipal leaders to turn assessment results into structured improvement programs that are operationally sound and fiscally responsible. Whether your center needs a full-scale redesign or targeted protocol refinement, we bring the expertise to bridge the gap between findings and lasting performance gains.

Our EMS strategy guide provides a proven foundation for aligning communications center improvements with your broader municipal EMS strategy. For communities looking at system-level redesign, our EMS design examples illustrate how comparable municipalities have achieved measurable gains in response times and resource utilization. And if you’re ready to take the next step, our public safety assessment process gives your team a clear, structured path from evaluation to implementation. Contact us today to discuss where your communications center stands and where it needs to go.

Frequently asked questions

How often should a municipality assess its emergency communications center?

Most municipalities should conduct a comprehensive assessment every two to three years, or immediately following any major incident, significant staffing change, or technology migration. Waiting longer than three years between reviews increases the risk of compounding gaps going undetected.

What metrics should be prioritized during an emergency communications center assessment?

Critical metrics include call answer time at the 30-second benchmark (QS30), dispatch accuracy rates, and interoperability test results across agencies. Research shows that two-level filtering systems impact QS30 differently depending on organizational structure, so context matters when interpreting these numbers.

What is the main challenge in balancing rapid response and precise assessment?

The core difficulty is that dispatch logic creates tension between thorough medical interrogation and fast unit activation, and centers must calibrate their approach to match their community’s call profile, resource availability, and clinical protocols.

Can technology upgrades alone solve communications center inefficiencies?

Technology upgrades address infrastructure limitations but do not resolve protocol deficiencies, training gaps, or organizational culture issues. Lasting improvement requires aligning technology investments with staff development and process refinement at the same time.
